Serverless Computing: Transforming the Future of Cloud Services

Serverless computing is a cloud execution model that lets developers build and run applications without provisioning or managing the underlying infrastructure. The cloud provider takes care of routine infrastructure work: OS upgrades and patching, security management, system monitoring, and capacity planning. Billing is pay-as-you-go, so developers buy backend services based on real consumption and are charged only for what they actually use. The main goal of serverless computing is to simplify the development and deployment of cloud code, so that developers can concentrate on the tasks the code performs rather than on the platform beneath it.

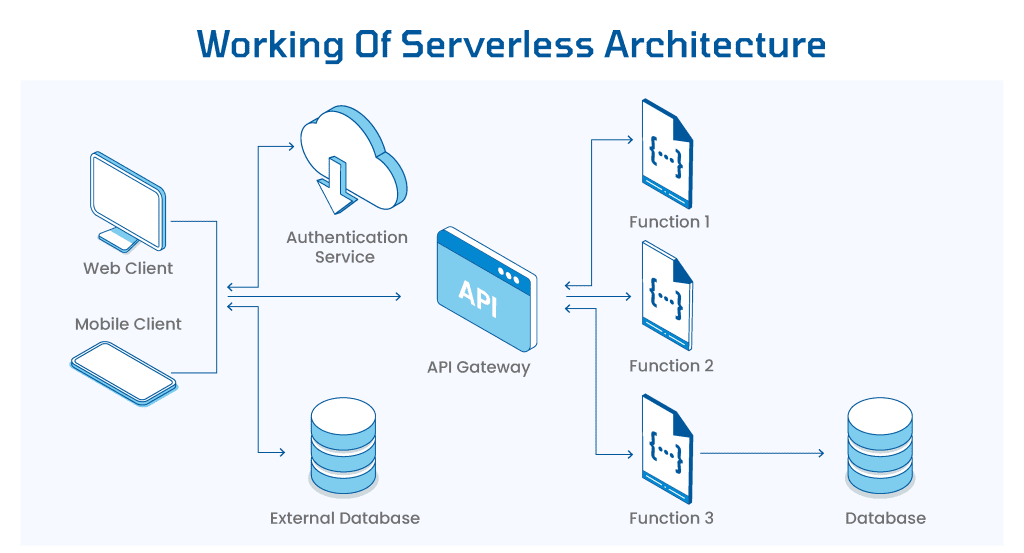

How Serverless Computing Works

Serverless computing frees developers from directly managing cloud-based machine instances. Code runs on cloud servers without the development team having to configure or maintain those servers, and billing follows actual resource consumption rather than the purchase of fixed units of capacity. When an application runs on cloud-based virtual servers, developers typically handle setup, OS installation, ongoing monitoring, and software updates themselves. In the serverless model, developers instead write functions in a supported programming language of their choice and deploy them to a serverless platform. The cloud provider operates the infrastructure and runtime software, connects each function to a trigger such as an application programming interface (API) endpoint, and scales function instances up and down on demand.
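To make the idea of "a function linked to an API endpoint" concrete, here is a minimal sketch of what such a function might look like in Python. The `handler(event, context)` signature and the `queryStringParameters` field are assumptions modeled on the conventions of common FaaS platforms; the exact names and event shape vary by provider.

```python
import json


def handler(event, context):
    """Entry point the platform would call once per incoming HTTP request."""
    # Assumed event shape: query parameters arrive under "queryStringParameters".
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")

    # The platform is assumed to turn this dict into an HTTP response.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Local invocation with a hand-built event; no cloud account is needed.
    print(handler({"queryStringParameters": {"name": "Ada"}}, None))
```

Notice that the function contains no server setup, port binding, or process management. Everything outside the function body is left to the platform.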

The phrase “Serverless Computing” might mislead many into thinking it means running applications without servers. Despite the name, servers are still involved; they are simply provisioned and managed by the cloud provider and hidden from the developer. Serverless computing represents a significant advancement in cloud technology, and this article is written for developers curious about this next phase in cloud computing. Let’s explore further in the sections ahead.

How does Serverless Computing stand apart from other cloud models?

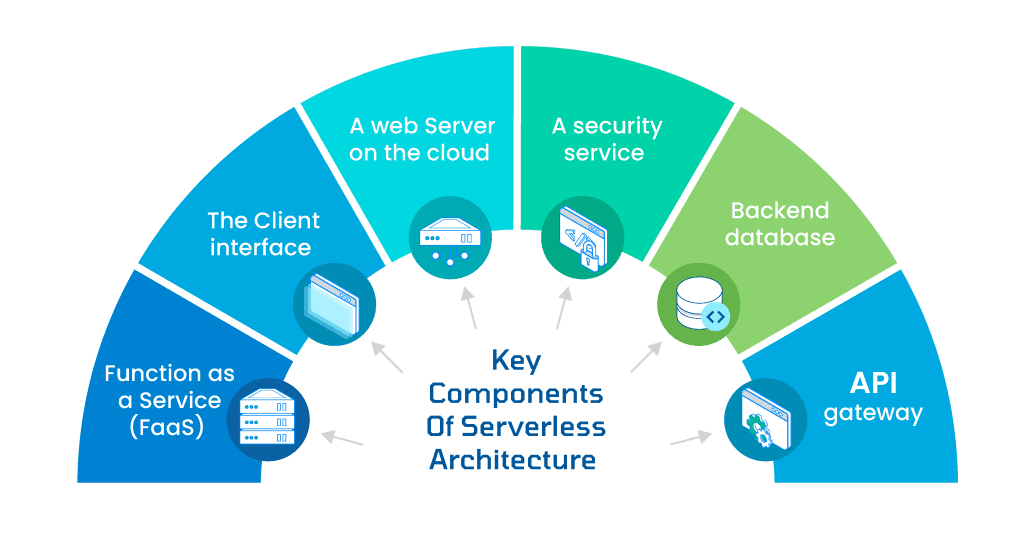

Cloud service models differ in who manages the servers and the software that runs on them. In FaaS (serverless) computing, the cloud provider handles resource provisioning, so developers never need to interact with servers directly. In other models, such as IaaS, PaaS, and SaaS, responsibility for server management is divided differently between the provider and the customer.

IaaS: Infrastructure as a Service means renting essential IT infrastructure from a cloud provider, including servers, virtual machines, storage, networks, and operating systems.

PaaS: Platform as a Service simplifies operations by having the provider maintain the platform software, acquire resources, and manage the underlying infrastructure, including storage, databases, and servers. This lets organizations concentrate on developing, testing, deploying, and managing their applications.

FaaS: Function as a Service, the serverless model, relieves businesses of most of the infrastructure management tasks that still remain under PaaS.

SaaS: With Software as a Service, the cloud provider operates and maintains the product, so subscribers do not have to manage or maintain the service or its infrastructure. SaaS works much like a rental arrangement in which a business subscribes to a software application accessible over the internet.

Key Characteristics of Serverless Computing

To qualify as serverless, a technology should exhibit the following five characteristics.

Event-driven scaling: Scaling is a vital aspect of serverless, and it removes the worry about scaling your solution when demand surges. Your solution scales automatically in response to events, timers, or incoming requests, without your direct involvement.

No need to cover idle capacity costs: You’re not billed for unused capacity. If your code isn’t active, there’s no charge incurred.

Highly available: Serverless platforms build availability and fault tolerance into the service that runs your code. In most cases you do not need to design for these capabilities separately, because the platform provides them automatically.

Server management is unnecessary: You won’t have to set up or maintain any servers. There’s no software or runtime installation, maintenance, or administration required.

Metered billing: Under a micro-billing system, you are charged per execution of your code. Vendors commonly calculate the charge from memory usage and execution duration. For instance, if your code uses 200 MB of RAM and completes in 3 seconds, you are billed only for the resources consumed during that execution.
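The metered billing example can be worked through in a few lines of Python. This is a rough sketch that assumes the provider meters usage in GB-seconds (memory in GB multiplied by duration in seconds), which is a common unit; the price constant is purely hypothetical and is not taken from any real price list. Note also that some providers meter the memory allocated to a function rather than the memory it actually uses.

```python
def gb_seconds(memory_mb: float, duration_s: float) -> float:
    """Convert one execution into GB-seconds (assumed billing unit)."""
    return (memory_mb / 1024) * duration_s


def execution_cost(memory_mb: float, duration_s: float,
                   price_per_gb_second: float) -> float:
    """Cost of a single execution at the given per-GB-second price."""
    return gb_seconds(memory_mb, duration_s) * price_per_gb_second


# Hypothetical price, chosen only to make the arithmetic visible.
ASSUMED_PRICE_PER_GB_SECOND = 0.00002

usage = gb_seconds(200, 3)  # 200 MB for 3 seconds -> 0.5859375 GB-seconds
cost = execution_cost(200, 3, ASSUMED_PRICE_PER_GB_SECOND)

if __name__ == "__main__":
    print(f"usage: {usage} GB-seconds")
    print(f"cost per execution: {cost:.10f}")
    print(f"cost per million executions: {cost * 1_000_000:.2f}")
```

An execution that never happens contributes zero GB-seconds, which is why idle code costs nothing under this model.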

Advantages and Limitations of Serverless Computing

Up until now, we’ve covered serverless computing and the Function as a Service (FaaS) model. Next, let’s delve into the benefits and constraints of serverless computing.

Advantages of Serverless Computing

Serverless computing boasts various advantages. Let’s explore a handful of them.

Reduced Operational Cost: Function as a Service (FaaS) runs code on pre-defined runtimes that are active only while a function executes, so infrastructure is used only for those periods. Because these runtimes are shared, operational costs drop noticeably.

Rapid Development: With the cloud provider managing the infrastructure, developers can focus on refining core features without worrying about underlying infrastructure maintenance.

Scaling Efficiency: Automatic horizontal scaling and streamlined infrastructure scaling (both up and down) significantly cut down scaling costs compared to Platform as a Service (PaaS) alternatives.

Simplified Operational Management: FaaS offers a straightforward approach to deploying and managing applications. Most importantly, it shortens the time it takes to turn your business ideas into reality.
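The Scaling Efficiency point above can be illustrated with a back-of-the-envelope estimate. The sketch below uses Little's law (requests in flight = arrival rate × average duration) to approximate how many function instances a platform would need at a given load. Real platforms apply their own scaling logic, so the function name and the `concurrency_per_instance` parameter are assumptions made for illustration only.

```python
import math


def required_instances(requests_per_second: int,
                       avg_duration_ms: int,
                       concurrency_per_instance: int = 1) -> int:
    """Estimate instances needed to serve a steady load (Little's law)."""
    # Average number of requests being processed at any moment.
    in_flight = requests_per_second * avg_duration_ms / 1000
    return math.ceil(in_flight / concurrency_per_instance)


if __name__ == "__main__":
    # The same function at three load levels, each taking 200 ms per request.
    for rate in (0, 100, 1000):
        print(rate, "req/s ->", required_instances(rate, 200), "instances")
```

At zero traffic the estimate is zero instances, which matches the scale-to-zero behavior that keeps idle costs away; at higher rates the count grows in proportion to load without any capacity being reserved in advance.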

Limitations of Serverless Computing

While serverless computing boasts several advantages, it’s essential to acknowledge its limitations. Let’s explore these aspects.

Infrastructure Control: Serverless architectures, overseen by cloud providers, offer no direct control over the infrastructure.

Long-Running Operations: Serverless functions are not ideal for extended batch operations, because they run on pre-defined runtimes and are subject to provider-imposed timeout limits.

Vendor Lock-In: Switching between cloud providers can be challenging due to dependencies on specific services.

Cold Start Delays: Because functions start in response to events, a function that has been idle may need to be initialized again before it can respond, which makes the first response after a period of inactivity slower.

Shared Infrastructure: Sharing resources might lead to neighboring applications affecting performance due to high loads, although this issue isn’t exclusive to serverless setups.
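The cold start limitation is often softened by doing expensive setup once and reusing it while a function instance stays warm. The sketch below simulates that pattern locally. It assumes the platform keeps module-level state alive between invocations of the same instance, which is typical but not guaranteed; `_create_client` is a hypothetical stand-in for real setup work such as opening a database connection.

```python
import time

# Module-level state: assumed to survive while the instance stays warm.
_client = None


def _create_client():
    """Hypothetical stand-in for expensive setup work."""
    time.sleep(0.05)  # simulated initialization cost
    return {"connected": True}


def get_client():
    """Create the client on first use, then reuse it."""
    global _client
    if _client is None:
        _client = _create_client()
    return _client


def handler(event, context):
    """Report how long obtaining the client took for this invocation."""
    start = time.perf_counter()
    client = get_client()
    setup_ms = (time.perf_counter() - start) * 1000
    return {"connected": client["connected"], "setup_ms": setup_ms}


if __name__ == "__main__":
    print("cold:", handler({}, None))  # pays the initialization cost
    print("warm:", handler({}, None))  # reuses the cached client
```

The first call behaves like a cold start and absorbs the setup time; later calls on the same instance skip it. This does not remove cold starts, it only limits how often their cost is paid.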

Disadvantages at a Glance

Control Constraints: Developers have limited control over the underlying infrastructure, relying heavily on the cloud provider.

Limited Adaptability: Not all applications or workloads align with serverless computing’s specific architectural requirements.

Cold Start Latency: Functions may encounter delays in starting if left idle, impacting overall application performance.

Potential Vendor Lock-In: Dependency on a single provider can restrict flexibility and hinder transitioning to another provider.
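One common way to reduce the lock-in risk is to keep business logic free of provider-specific types and wrap it in thin adapters. The following is a minimal sketch of that idea; both adapter signatures and the event shapes they expect are hypothetical and do not describe any particular provider's API.

```python
import json


def greet(name: str) -> dict:
    """Provider-agnostic business logic: no cloud-specific types appear here."""
    return {"message": f"Hello, {name}!"}


def provider_a_handler(event, context):
    """Hypothetical adapter: receives a dict event, returns a dict response."""
    params = event.get("queryStringParameters") or {}
    result = greet(params.get("name", "world"))
    return {"statusCode": 200, "body": json.dumps(result)}


def provider_b_handler(request_args: dict):
    """Hypothetical adapter: receives query arguments, returns (body, status)."""
    result = greet(request_args.get("name", "world"))
    return json.dumps(result), 200


if __name__ == "__main__":
    print(provider_a_handler({"queryStringParameters": {"name": "Ada"}}, None))
    print(provider_b_handler({"name": "Ada"}))
```

With this layout, moving to another provider means rewriting only the small adapter, while `greet` and its tests stay untouched. Dependencies on provider-specific services such as databases or queues still need their own abstraction.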

In many scenarios, the benefits of serverless computing and the Function as a Service (FaaS) model overshadow these drawbacks. However, developers must weigh these considerations carefully before opting for this approach.